I’m always looking for more productive ways to work with Power BI and associated tools. As I covered in my article last week, development of Power BI reports using SharePoint for your file storage can be so slow that it is impractical. In this article I discuss the various options to speed up the process, plus I then show you how to develop your Power BI workbooks so they use a local PC copy of the files on your HDD (which is relatively fast), then switch to the SharePoint source when you are read to publish online.

Options to Improve the Power BI Authoring Experience using SharePoint File Storage

There are quite a few approaches to improving the Power BI authoring experience when using SharePoint as your file storage. Options include

| Approach | Pros | Cons |

| 1. Sync the SharePoint files to a network connected storage location using OneDrive. Develop the Power BI report to point to this local file storage instead of SharePoint. | Relatively fast to develop. | Files are still on a network drive, so not as fast as local files.

Need to install a gateway when you otherwise may not need one. |

| 2. Use Web.Contents() to directly access the files as you would a web page. | Reportedly faster to load a file (I’ve never tried it). | I assume it doesn’t work for file combine. |

| 3. Use Sharepoint.Contents() instead of Sharepoint.Files() | Reportedly faster to load a file (I’ve never tried it), but can still be slow to navigate. | I assume it doesn’t work for file combine. |

| 4. Use Dataflows to map the raw file, then load to PBI from there. | Moves the problem to dataflows so you can then develop quickly from a tabular source. | Requires an additional level of refresh scheduling that needs to be aligned for it all to work. It’s still good, but. |

| 5. Develop on local file storage but deploy with SharePoint storage | Best of both worlds | Requires some manual editing of queries prior to deployment. |

There may be other approaches I haven’t covered.

Develop Using Local Files but Deploy With SharePoint

There are benefits in each of these approaches as covered below.

| Storage Type | Benefits | Issues |

| Local File Storage | Accessing the files is the most responsive, particularly during development and file combine tasks. | You don’t get access to all the benefits of SharePoint as your file storage solution. |

| SharePoint File Storage | Inbuilt version control

Check in and check out Ease of access to multiple users geographically dispersed around the globe. The ability to access the files from Power BI without the need for a gateway. |

The development can be slow because the refresh and preview process can be slow. |

The solution I now prefer to use (and that I am going to explain in detail here) is to develop your Power BI Reports using local files, then manually switch to SharePoint files prior to deployment. Doing it this way gives you the best of both worlds. To do this, I configure the report to connect to both a local folder and SharePoint folder in Power Query and toggle between the two depending if I am developing or deploying. But there are a few things you need to do in the process to make sure everything works properly. The file path structure for SharePoint is different to the file path structure for local files, so you need to use some caution.

Walked through Demo

To demonstrate the concept, I will show you how I went about doing a file combine using my approach.

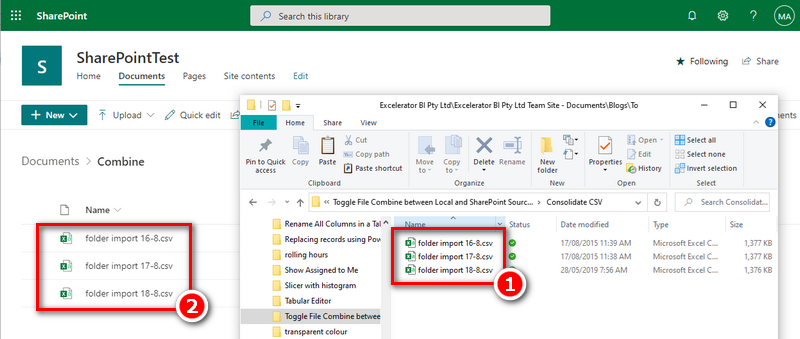

I have 3 simple csv files. I have loaded them into a local folder on my PC (#1 below) and also onto a SharePoint folder (#2 below). Note, I went ahead and added some extra files too so I could demonstrate the filtering process (this is particularly important). Also note, the folder path on my PC and SharePoint are not the same, but I do have one sub folder that is the same – the “Consolidate CSV” sub folder containing all my files.

Copying the Files to Your PC

Now there are a few ways you can get a copy of the files from SharePoint onto your PC. The easiest (and my recommendation) is to use OneDrive to Sync the folders you need. That way your PC files will stay in sync with the SharePoint files and the sub folder structure will be the same. But you can also set up a local folder on your PC and simply place the files you need there manually. If you do it this manual way, I recommend that you use at least the lowest level subfolder name so it matches the lowest level SharePoint Sub Folder name exactly (spelling, spaces and capitalisation). This is needed later in the process to ensure that you are swap easily between local files and SharePoint files. It is not mandatory that you replicate the sub folder structure from SharePoint exactly, as long as you can identify your files using at least one sub folder.

Start with the Local PC Version of the Files

This approach is designed to make the development effort as fast as possible. It therefore follows that you should start the process using the local HDD version of the files.

The generic pattern to follow is:

-

Connect to your local master folder on your HDD.

- Turn off “enable load” and call it RawLocalFiles.

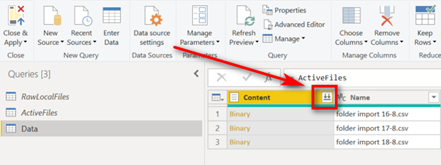

- Create a new query referencing RawLocalFiles and call it ActiveFiles and turn off “enable load”.

-

Create a new query referencing ActiveFiles and carry out the transformation you need (I will be doing File Combine in this demo).

- Make sure the query in step 3 does not have any absolute references to anything related to the local file path.

- Repeat for as many files as you need to process.

- Connect to your SharePoint master folder.

-

Turn off “enable load” and call it RawSharePointFiles.

- Make sure the list of files looks identical to the list in step 2.

- The sub folders can be different as long as there is at least 1 sub folder than you can use to uniquely identify the files you need (or a file name).

- When you are ready to deploy, manually edit the query ActiveFiles in step 3 to point to RawSharePoint Files.

In this demo, I used Power Query file combine to connect to my local HDD folder and combine the files. For those who are new to the Power Query Combine feature can read my article here.

Connect to your local master folder on your HDD

- Use Get Data\From File\From Folder

-

Browse to the main (top level) folder containing your source files on your PC.

- In my demo this will be a top level folder containing my file combine folder and some other files (to demonstrate the point). In your case, just connect to the top level folder of the files that you need to use. It is easiest if they are all in sub folders beneath that.

-

Click Transform Data – don’t click Combine yet.

- I turned off “enable load” and called it RawLocalFiles.

Note the shape and structure of the table above – I will refer you back to this later.

Create the ActiveFiles Query

- Right Click on RawLocalFiles and select Reference

- Rename it ActiveFiles

- Turn off “enable load”

Use Your Files as Needed

In my demo, I am going to do a File Combine.

- Right click ActiveFiles and select Reference

- Rename the query and call it “Data”

-

Filter the files so you are only referencing the files you need (for file combine in this case). In my case I filtered on the Folder Path, selected Text Filters, Contains, and typed the name of the sub folder I need called “Consolidate CSV”.

- It is essential that you do not use any backslashes in your filtering. If you need to use backslashes to get to the correct sub folder, I will give you a bonus trick and the end.

- Click the File Combine Button

Then I ended up with this.

Before moving on, I am just going to do some tidying up by grouping my queries together, like shown below.

Remove references to anything related to the local file path

This is critically important, otherwise everything will fail. I manually checked every single step in every query to ensure there were no references to the file path on my PC. It will depend on what you are doing. If you are referencing a file by name, it is normal for Power BI to add a named reference to the folder as well as the file name. If this happens, you must remove the folder reference.

The RawFiles queries can and should have absolute reference to the sources, but these are the only ones.

Connect to your SharePoint master folder

I created a new source, from SharePoint Folder. Note you must use only the URL part of the SharePoint folder as shown below, not the full path to the document library.

Power Query will show all the files in all of the document libraries on this SharePoint site. It is most likely you will not want them all. So next, I went to the Folder Path column (shown below) and filtered to the Sub Folder I needed called “Some Folder”.

At this point – stop and take note. The list of files below is almost identical to the list of files on my local drive shown earlier. But the folder structure is different on SharePoint and my local PC. This doesn’t matter. All that matters is that from here on, you only use relative references to your files by either using the name of the files (without reference to the folder) or buy using Contains Text as covered earlier, making sure you don’t reference backslashes or slashes.

I renamed this query as SharePointFiles and set it not to load.

Now there are two source folder queries, one called RawLocalFiles and one called RawSharePointFiles. Both queries present the same list of files to be processed.

Change the Source Before Publishing

So that’s it. You can now develop to you heart’s content with highly responsive queries. When you are ready to deploy, just edit the single line of code in the ActiveFiles query (1) so it doesn’t point to RawLocalFiles (2) but instead points to RawSharePointFiles (3).

Use a Parameter to Toggle?

Now at this point you may be thinking that it would be neat if you could configure a parameter to toggle between both. I agree – great idea. But in my testing I couldn’t get it to work. I tried creating a parameter and then a few ideas such as an IF statement to toggle the file source depending on the parameter value. It was all going swell until I published the report. Once it was published, PowerBI.com reported that I needed a gateway configured for the local files, even though they were never going to load. If anyone can work out a way to configure this with a parameter without needing the gateway, I would love to know about it.

Changing Slashes to Backslashes

As a bonus trick, you can actually replace the slashes in the SharePoint File path with backslashes. If you do this, you can use a filter on Text, Contains that uses a more absolute folder path, like “\main folder\sub folder\combine csv” for example. You need to flip the slashes in the SharePoint list for this to work.

What do you think?

Tell me what you think in the comments below. If you have a better way, I would love to hear about it. Especially if you know how to fix that parameter thing so you can use a Parameter to toggle the source without configuring the gateway.

Your article helped me a lot, is there any more related content? Thanks!

Your article helped me a lot, is there any more related content? Thanks!

As long as there is any Quary that enumerates the local dive, the PBIX can’t be published to the service, even when you employ an “IF” statement & a parameter to switch the sources.

So the trick is to convert the Quary that contains the local source to a “function” (just remember to delink the function you created using properties) & then delete the original Quary.

Call the function in a “IF” statement in place of the local quarry table. The “ELSE” being the SharePoint quarry.

This way the table listing the local drive does not activate till the condition is true & a connection is not created until the parameter wants it to.

while set to SharePoint the ‘IF” statement skips the function (for local) so the resultant table is not created & hence will not be part of the publish.

No gateway needed & You can publish without any errors. No need to open the quarry editor either, pick the parameters & refresh before you publish & then simply switch back to local & refresh again.

Continue to work on Local as it helps in faster preview with no internet latency during development & when you are ready to publish, switch to SharePoint as source refresh & publish.

Very interesting. So I think you are saying that if the Queries are inside a function, they will not be called unless the IF statement is true, hence it eliminates the issue of having to manually change these settings. I will certainly test this out.

Matt, we are having an odd problem where it seems that running these queries while it is stored locally through one drive is filling up our hard drive storage. We think it might be storing data temporarily locally and filling up space. Problem is, it doesn’t seem to lose the data after running, and we can’t find it anywhere. We have scoured through all the files locally, including the temp folder. Unfortunately, we can’t seem to find where this data is going. If you have any ideas please let me know.

I’ve never heard of this, but I assume it is possible. But surely the space is returned once the task is done. I seem to recall Chris Webb talking about “paging” occurring in PQ under certain circumstances, but it is a vague recollection. The tool I use to check disk space is WinDirStat – it’s fab. I use this to find failed PBI sessions that need to be deleted, so it may help if there are remnant files left behind. https://windirstat.net/

I contacted Chris Webb. He suggested checking out the cache settings under options and settings\options\global\data load. Certainly clear the cache, but you could also experiment to change the max cache size.

Matt,

I work on various desktop systems and have others who also work on various Desktop(local files) and of course we’ve tried many various ways to deal with Local paths (my user path, vs my team mates etc).

Recently, I started looking into Power BI Dataflows and using the Power Query and that way the paths are all cloud based vs local.

Curious – do you not advise that right?

That sounds like a great solution to me.

Hi Matt, nice post.

I’ve successfully combined Excel/CSV files using most of the options you mention. Actually, it’s irrelevant from where you get the data, you just have to use Table.Combine() on the resulting tables after parsing each file (you can achieve this without making a mess by using a custom function to process the contents of each file) . The only caveat is that you need to be comfortable writing/editing M code.

Thanks Matt!

on this step, –Remove references to anything related to the local file path– I have 4 queries, Data, RawLocalFiles, RawSharepointFiles, ActiveFiles, this last is only has a Reference =Folder.Files, I have to delete al this step?

Maybe I need to reword this. The RawFiles queries can and should have absolute reference to the sources, but these are the only ones.

Hi Matt,

Nice post, I think your preferred option is the best.

Regarding the Web.Contents method I believe it is technically possible to achieve file combine (or equivalent) but its messy. You can maintain an excel table of url links on sharepoint and use Power Automate to append to the table with a share link every time a file is added to the directory (I have briefly tested this).

From there it’s a matter of using Power Query to refresh the list table and then use a custom function to combine all files in the list.

How about a parameter that has the file path as text? I would think you could even pre-fill the list of choices and wouldn’t need a gateway.

I’ve tried that. It works (using Expression.Evaluate) but once you deploy to the service it still requires the gateway to be configured for the local files even though it is not used.

Ive definitely found that the best way to deal with slow Sharepoint file authoring is to author your pbix connecting to local files and then converting the connection to files stored on Sharepoint. Realistically the refresh isn’t too bad in the cloud unless the file enormous (most of my Sharepoint files take less than 5 mins to refresh in the cloud although I have an enormous one which takes about 40 mins in the cloud). It’s the best solution though to ensure business continuity although depending on the file structure I do also like connecting to an Outlook folder.

Hi Matt,

Your posts are awesome. However, I do think you should produce youtube videos to showcase longer and more complex posts like this one. I do think you’ll engage with your audience much more using the youtube approach.

Thanks, Daniel

Hi Matt, I don’t agree with your ‘Cons’ on Dataflows. I’m successfully using Dataflows to combine hundreds of Excel files which are stored on a SharePoint library. No issue with that. The only downside I really see with this option is that it adds another piece of complexity and it’s an additional step in the refresh cycle (1. Dataflow, 2. Dataset).

Oh, that’s interesting. So can you please confirm: you are using File Combine in Dataflows without Power BI Premium? I thought referencing one query from another query in dataflows was a premium feature.

Hi Matt,

Just jumping in here as I was going to give the same feedback as Frank.

You can successfully combine tables, but you have to disable load on the source table, and then merge/append from that in the new table.

With some delay…

Computed and linked entities are indeed PBI Premium features only (https://docs.microsoft.com/en-us/power-bi/transform-model/service-dataflows-overview#dataflow-capabilities-on-power-bi-premium). However, for combining files this is not relevant. You can merge several files (of the same/similar structure) with in one query. Have a look at the video demonstration from Curbal: https://www.youtube.com/watch?v=qdcAoZU8B8Q&t=221s