Level: Beginners

If you want to learn new skills using a new tool, then you simply must practice. One great way to practice is to weave the new tool into you daily problem solving. If you have something meaningful to do with the new tool, then you are much more likely to be motivated to practice. And the new tool I am talking about of course is Power BI.

Last week I showed how easy it is to use Power BI to help you track down large files saved in Dropbox so you could manage the overall space usage. As a result of that article, Graham Whiteman posted a comment suggesting it would be a good next step to find duplicate files. I think that is a great idea, so I decided to test it out on my PC. Read on to see how I did it, and how you can do it too.

Create a Query to Fetch All PC files

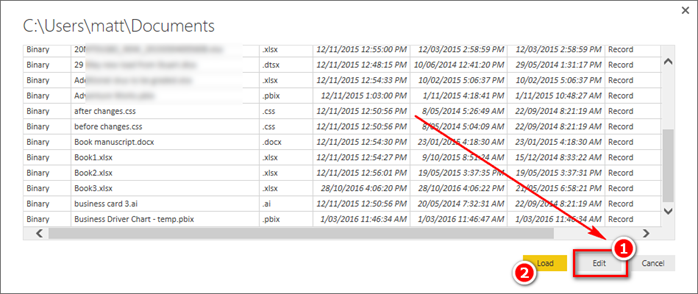

I started a new Power BI Desktop file, then connected to my PC documents folder

I immediately selected Edit query as shown in 1 below.

The only time you would immediately select Load (2 above) is if the data you are imported is already in the correct shape for Power BI.

The only columns I need are the file name, date modified, attributes and path (shown below). I Multi selected the columns I wanted to keep, then I right clicked and select “remove other columns”.

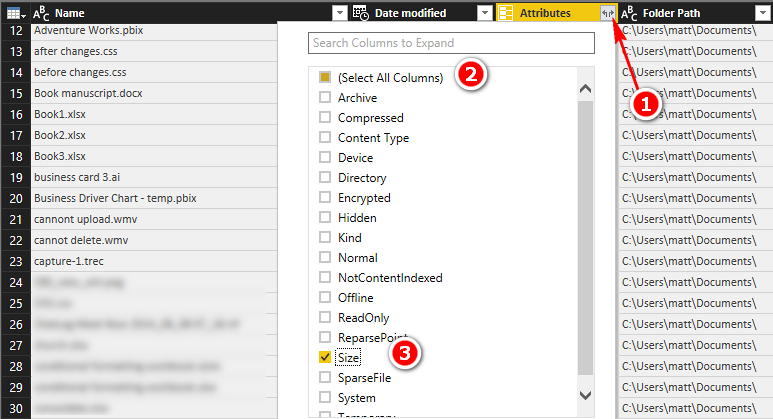

The next step was to extract the file size from the attributes list. To do this, I expanded the list of attributes (1 below), deselected all the columns and then reselected the file size (3 below).

Then I renamed the query (1 below) and changed the query so it didn’t load to Power BI by right clicking on the query and un-checking the enable load option.

This created a query that links to the PC, keeps the columns of data needed but didn’t load anything to Power BI yet.

Create a New Query that Accesses the File List

The next step was to create a new query that references the File List. I right clicked on the first query (1 below) and then selected Reference (2 below). Note how the File List query is shown in Italics indicating that it won’t load to Power BI.

The next step was to merge this data with the itself by going to the Home Ribbon and selecting Merge Queries.

In the Merge Queries dialog, I joined the list of files File List (2) with the original query File List so that it was joined to itself on 3 columns (the File Name, Modify Date and File Size) but not the File Path as shown below.

The above steps added a new column to the query. I then expanded the new column as shown below making sure to keep the original column name prefix.

Find The Duplicate Files

The second query now looked like this. As you can see in the image below, the query returned all the files (name column) along with the folder paths from the query “File List” shown as 1, and a second column containing the folder paths from the query “File List (2)” shown as 2 below.

The next step was to get rid of all rows in this query where the 2 folder paths are identical. Doing this is easy with a custom column. I added a custom column (steps 1 and 2), and wrote a formula to return TRUE if the 2 folder paths were identical.

I then filtered out everything that returned a TRUE in the new column using the filter button as shown below.

I then deleted this custom column as it was no longer needed. I just right clicked and selected remove.

Format the Number Columns

It is very important in Power BI to set the number formats before loading the data. Any numeric column that has a data type “Any” should be changed to a suitable numeric format (as shown below).

I did this, renamed the query to be called “Duplicates” and then selected “Close and Load” to get the data into Power BI.

If you want a comprehensive lesson on how to use Power Query, checkout my Power Query Online training.

Time to Write Some DAX

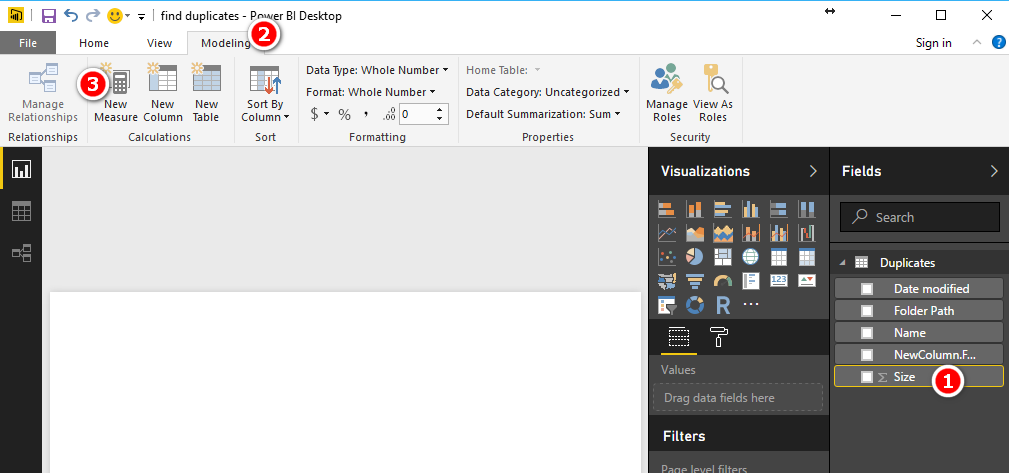

Now the data is loaded, you of course I could just drag the one or more of the columns to the Power BI canvas. But remember half the reason of doing this is to get some new skills. So instead of dragging the Size column and creating an implicit measure, I wrote some DAX – it isn’t hard to get started with such simple formulas. Here’s how to do it.

Select the Size column, go to the Modelling Ribbon and select New Measure.

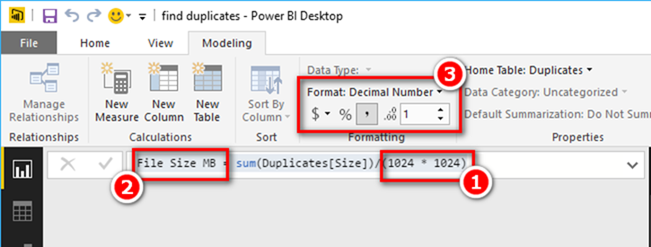

The formula I wrote is as follows

File Size MB = SUM(Duplicates[Size])/(1024 * 1024)

Note a few things that I was able to do by writing this measure myself

- I converted the units of the result from bytes to megabytes by dividing by (1024 x 1024).

- I gave the measure a more meaningful name “File Size MB”

- I was able to set the formatting to comma separated with 1 decimal place

And of course I practiced my DAX.

And the Results

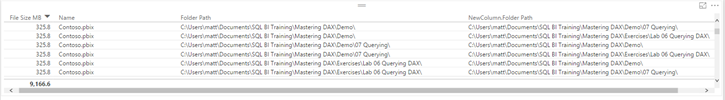

I simply then added the file size, File Name, Folder Path and Second Folder Path to a table in Power BI like shown below.

I then discovered I had around 9 GB of duplicate files on my PC. I sorted the table by File Size descending and discovered that I had multiple identical copies of a contoso.pbix. It looks above like there are 6 copies of contoso.pbix but this is deceiving. Every copy of a file will find a match with every other copy. If you note in the Folder Path column, there are only 3 unique folder paths, hence 3 files.

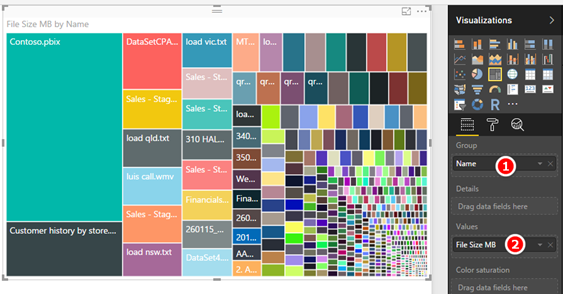

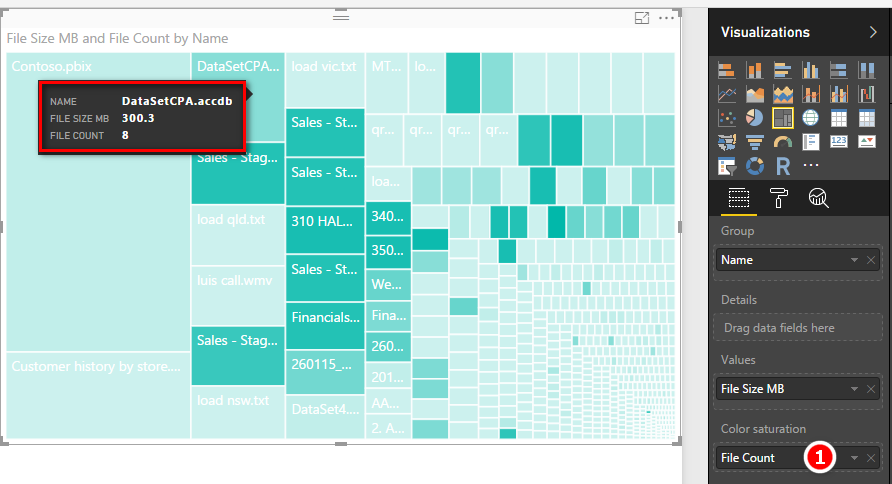

The next thing I did was add a Tree Map as shown, with the file name in the Group section and File Size MB in the Values section.

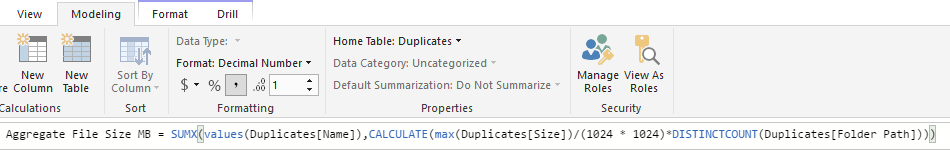

To find out accurately how many copies of each file there were, I had to write some more DAX. This formula is a bit more involved (intermediate DAX).

Let me explain this formula starting from the inside out. There are 4 functions in this DAX formula and I describe their role below.

- SUMX is an iterator. It iterates over a table specified as the first parameter (VALUES in this case). You can read more about SUMX here.

- The VALUES function returns a table of unique file names (in this case it is unique values in the column Duplicates[Name]). So SUMX above will iterate over each file name in the name column.

- SUMX is iterating over a Virtual Table (VALUES). The CALCULATE is required to force context transition.

- Then for each file name in the table (in 2 above), DISTINCTCOUNT will count how many unique folder names there are.

I then added the new File Count measure to the Colour Saturation section of the Tree Map (1 below). This does 2 things. Firstly it shows the high folder count files as being a darker colour, and secondly it adds the file count to the tool tips (visible when you hover the mouse over the visual).

And Now Some Fun

I’ve been looking for an excuse to do this for some time. I want to find the fattest fish in my pond (aka most space taken by file name). I went to http://visuals.powerbi.com and downloaded the Enlighten Aquarium custom visual.

https://app.powerbi.com/visuals/show/Aquarium1442671919391

I then imported the custom visual into Power BI Desktop

The I copied my Tree Map visual (Ctrl-c, Ctrl-v), selected the copy and changed the visualisation to be the Aquarium. This visual is showing the largest individual files regardless of location or how many copies. I am not saying this is the best way to visualise data, but surely it is one of the most creative.

Here is my final workbook canvas

For the purists out there, I wrote a new file size formula as follows.

The original formula I wrote double counts the file size when there are multiple duplicates. The above formula is almost identical to the File Count I explained above. The only difference really is the inclusion of MAX(Duplicates[Size]). This is a “trick” to handle the fact that for each file name there will be multiple records in the data model. Each file will have the exact same file size, so by selecting MAX I simply get to access the file size. I could have used any other aggregator (eg Min, Avg, Sum) and got the same outcome.

I haven’t shared the actual workbook here. The whole idea is for you do try this yourself so you get 3 benefits; more disk space, some practice with Power BI Desktop and have some fun.

Good info. Lucky me I reach on your website by accident, I bookmarked it.

I really like your writing style, superb info , thanks for putting up : D.

Real informative and fantastic body structure of subject matter, now that’s user friendly (:.

Thank you for the good writeup. It in fact was a amusement account it. Look advanced to far added agreeable from you! By the way, how can we communicate?

Some really interesting information, well written and generally user genial.

I love it when people come together and share opinions, great blog, keep it up.

My husband and i have been so relieved that Edward managed to finish up his research from your ideas he discovered when using the web page. It’s not at all simplistic just to continually be making a gift of helpful tips which others might have been trying to sell. And we realize we have the writer to appreciate for this. The specific illustrations you have made, the simple website navigation, the friendships your site help to instill – it is mostly sensational, and it’s making our son and us reason why this subject matter is pleasurable, and that’s tremendously vital. Thank you for all the pieces!

Amazing blog! Do you have any tips for aspiring writers? I’m planning to start my own blog soon but I’m a little lost on everything. Would you recommend starting with a free platform like WordPress or go for a paid option? There are so many options out there that I’m totally confused .. Any tips? Bless you!

amei este site. Para saber mais detalhes acesse nosso site e descubra mais. Todas as informações contidas são informações relevantes e exclusivas. Tudo que você precisa saber está está lá.

fabuloso este conteúdo. Gostei bastante. Aproveitem e vejam este site. informações, novidades e muito mais. Não deixem de acessar para descobrir mais. Obrigado a todos e até a próxima. 🙂

This is a very good tips especially to those new to blogosphere, brief and accurate information… Thanks for sharing this one. A must read article.

you are really a good webmaster. The site loading speed is amazing. It seems that you are doing any unique trick. Furthermore, The contents are masterwork. you have done a excellent job on this topic!

As soon as I noticed this site I went on reddit to share some of the love with them.

Some genuinely interesting details you have written.Assisted me a lot, just what I was looking for : D.

Hi Matt,

When it comes to extracting properties of files, in addition to the file size, reated date, modified date, etc. can it be possible to get the “author” as well also by using Power Query?

Thanks,

Mmmm, it would appear not. I think you can do this with VBA, but I guess that is not the same. You could request the feature at ideas.powerbi.com

I got it. Thanks for your reply and advices.

Simply Amazing..! I am not aware of one can browse folder and extract metadata of folder structure.. Great..!

Good finding for me ..!

Thanks

Hi

A very useful tutorial ! Thanks

One question : which tool are you using to provide pictures with symbol and the animation gif ?

I use fastsone capture. Bought it about 10 years ago. Best $20 I have ever spent in software.

Very useful, thanks Matt

Awesome!

This is a very well-structured, content-rich and beautifully presented tutorial. A real opportunity to learn some very useful PQ, DAX and PBI techniques.

Thanks a lot Matt