Level: Intermediate

I spent a lot of last week helping to configure Power BI in preparation for go live for a client. One of the important things to do when designing a Power BI solution is to make sure you have a good design for your user security access. Today I am going to share the approach I used to track user security configuration with Active Directory Groups.

This post is more about Power Query than it is about Active Directory, so even if you don’t have this specific scenario, it is still worth reading this blog post to see how I dealt with some tricky issues in cleaning data that was extracted into a text file using Power Query.

Using Active Directory Groups

I don’t want to go into a lot of detail here, but in short, we set up dashboards and reports targeted at different groups of users. Rather than assign users access directly to the dashboards in Power BI (by adding their email addresses one by one), a much better solution is to create an Active Directly Group, assign the users to the active directory group using the normal business process, and then assign the AD group to the Dashboard in Power BI.

Note that you must assign an email address to the Active Directory group to be able to do this.

The benefit of this approach is that you can have a single corporate process for assigning user access, and this is normally Active Directory (for medium to large companies).

The Problem – How to Keep Track of Which Users Have Access

If you are an Active Directory Administrator, then I am sure you have all sorts of tools to work out who has what access. But let me tell you – I don’t have those tools (or don’t know about them anyway), and I quickly found out it was a pain to try to check user access when you don’t have the right tools.

Command Line Tricks to Check Users in an AD Group

A quick question around the office, and Lester told me how to run a command line request to find out which users are in a group. To do this:

- Open a new command prompt on your PC (that is connected to the company network of course).

- type the following command

net group /domain "GROUP NAME IN INVERTED COMMAS"

The above command line string will send a list of all users assigned to the group to the screen like shown below

Checking One Group at a Time is a Pain too

This was great, but soon I realised I wanted to get ALL the groups I wanted, and then look at the data in Excel. I didn’t want to have to go to the command line each time, so I developed the following process to create a simple to use Excel file. This is a summary of the steps I took to achieve this outcome.

- Created a batch file that ran the command line code for all the groups I needed to interrogate (one after the other), and then sent the results of each of these commands to a single text file.

- Imported and cleansed the data in the file to Excel using Power Query

- Set up some slicers on top of my final Excel table to make it easy to access the information I needed.

This is what I ended up with

Below I step through the details on how to complete each step to achieve this final report.

Step 1. Move the Manual Code into a Batch File

This is easy, even if you haven’t done it before

- Open notepad.exe and paste the first line of code that I showed above.

- I appended some more code at the end of the line to tell the command line to send the results to a file (instead of sending the results to the screen).

Note I added a single > symbol pointing to the name of the output file in the first line of code below. - Then I duplicated the first line of code multiple times (cut and paste), once for each group I needed – then changed the group name of course. Also note that the > symbol is a >> for lines 2+ in the batch file (> means send the data to a new file, >> means append the data to an existing file). I had many more groups in real life.

net group /domain "FINCUBE GROUP" > c:\users\allingtm\Documents\list.txt net group /domain "FINCUBE ALL" >> c:\users\allingtm\Documents\list.txt net group /domain "FINCUBE ADHOC" >> c:\users\allingtm\Documents\list.txt

Now save the text file with a *.bat extension in an easy to find location (note, first change the file type filter in Notepad to be *.* instead of *.txt). From the command line, execute the batch file by typing the name of the file (including the full path and excluding the bat extension). The command line will then chug away and produce the output file with all the Active Directory information needed. This is what the final file output looks like in a txt file (sample of test data only of course). In my real scenario there were many more names and many more groups which is of course exponentially harder to manage manually than this simple test data.

Step 2. Import into Excel Using Power Query

I am not going to go through every step I used in Power Query, one at a time here as this is an intermediate post. I have however attached the workbook at the bottom of the page – download it and take a look if you want more details about each step in the transformation. I am just going to call out some of the more interesting things I had to do to get the final output.

The Default File Output is not Very Excel Friendly

The first thing to note is that this output is great for a command line screen, but not very good for Excel. So you have to deal with these issues in Power Query the best you can. It is easy to deal with blank lines and dotted lines etc with a simple filter. Just deselect anything that is not of value for the task at hand as shown below.

All of the lines I have deselected (shown in the image above) are repetitive lines that are created for each of the AD Group commands in my batch file.

Once I had done this, I had a single column of text data as shown below. Note the name of the groups appear first, followed by one or more rows of user names. This pattern is then repeated down the page. Each row of names has 1 or more user names per row – tricky!!

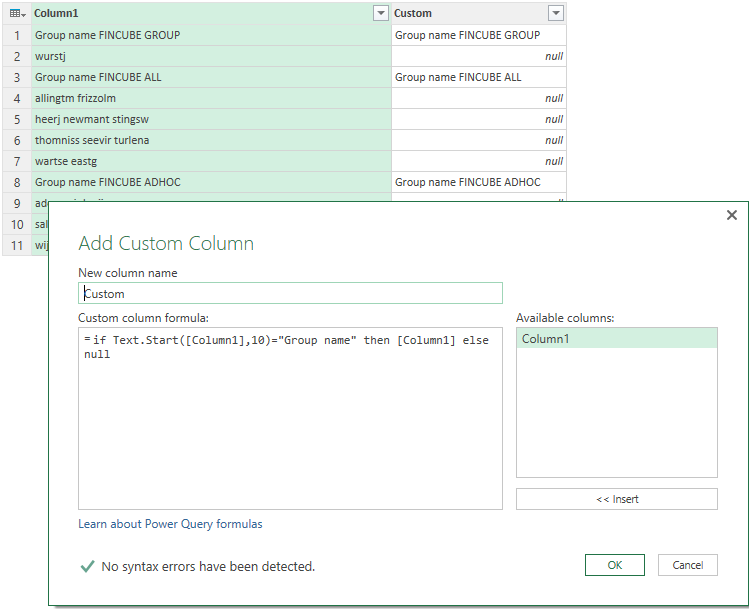

I then added a new custom column in Power Query by hand writing an IF formula. An If formula in Power Query Language (PQL) is in the format

if something1 = something2 then something3 else something4

Note PQL is case sensitive for the formulas and the text it is looking at.

See my formula below along with the output in a new custom column.

Basically I am checking the first column to see if the row is a Group Name or a list of User Names. If it is a Group Name, then return that Group Name. If it is a User Name, then return null. The reason null is required is important – it is then easy to copy the group name down the list as shown below

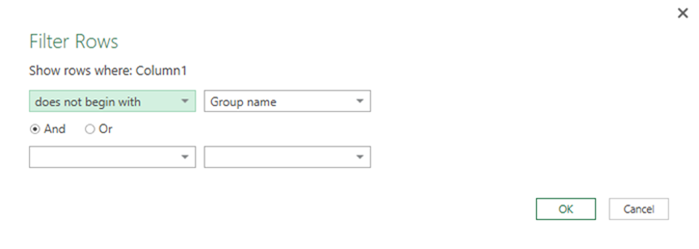

Now that every row has a group name as part of the row record, it is now OK to go ahead and delete every row in the first column that starts with the text “Group name”. I did this with a filter rather than manually selecting each row – that way it is future proof if I add new group names down the track.

Remember everything is case sensitive, so typing “group name” would fail here as my data uses “Group name”.

Split the User Names into Columns

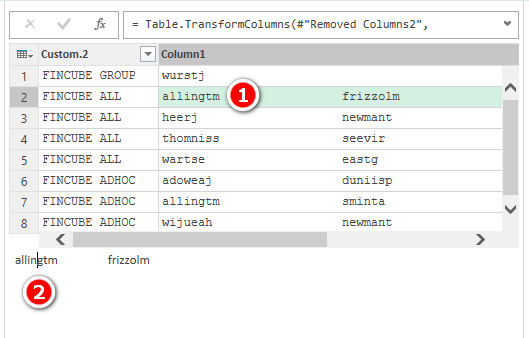

This is what I have now (after some reorg). Time to split the users into columns.

This looked easy, but there was a surprise waiting for me. When I split on “space” repeatedly, I discovered the number of spaces between each name varied by line – anywhere from around 17 spaces up to about 21 spaces in total. This stumped me – how was I going to iterate over this file and remove a seemingly random number of spaces to parse out the names? I gave up and went to lunch.

During a nice kebab with my family, I had a brain wave – I wondered if the file may have been a fixed width file format, adding more or less spaces depending on the number of characters in each user name. I then remembered an article (Chris Webb I think) talking about the new monospaced font feature in Power Query – just what I needed. As soon as I got home, I found the setting for the mono spaced font and turned it on – BAM! My hunch was correct.

It wasn’t until later that I realised that I should have known this from looking at the original text file – oh well.

I then selected one of the cells of user names (shown as 1 below) and then selected the results pane (shown as 2 below). Once the cursor is in the results pane, I used my arrow keys to move the cursor left and right to count how many characters made up each “column” of user names (25 as it turned out).

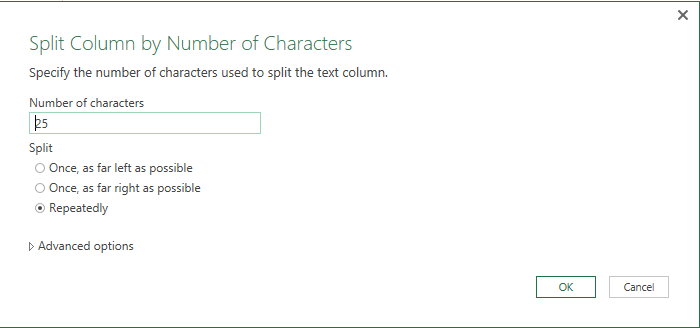

So I applied a “split column by number of characters” step as shown below.

I then un-pivoted the resulting user name columns, trimmed out the trailing spaces, removed the columns I didn’t need and did a general clean up. This is what I then sent to a table in Excel

Step 3: Set up some slicers to make it easy to access the information

The last thing I did was add a slicer for each of the columns. This gave me a very usable tool where I could select either a group or a user name, and see the relationship between the two in the resulting filtered table. See below.

And given I had set this up using a batch file and a Power Query report, the process is infinitely repeatable with very little effort. 20 seconds to re-run the batch file, 40 seconds to refresh the Power Query workbook, and in as little as 1 minute I can have a refreshed set of data.

You can download the sample text extract, the sample batch file and my Power Query workbook here if you would like to look in more detail at the process.

If you want a comprehensive lesson on how to use Power Query, checkout my Power Query Online training.

There is perceptibly a bunch to realize about this. I think you made certain nice points in features also.

My husband and i felt quite excited when Raymond managed to do his basic research from the precious recommendations he got through the web pages. It’s not at all simplistic just to possibly be giving freely tips and hints which often others may have been trying to sell. So we remember we’ve got the writer to give thanks to because of that. Those explanations you have made, the easy web site menu, the friendships you can assist to engender – it is most wonderful, and it’s really leading our son in addition to us reason why that content is entertaining, which is certainly quite mandatory. Thanks for everything!

I happen to be writing to let you understand of the really good experience my wife’s daughter went through using your blog. She noticed several details, with the inclusion of what it is like to have a wonderful helping heart to get folks very easily thoroughly grasp a variety of impossible subject areas. You actually surpassed our expectations. I appreciate you for coming up with those productive, trustworthy, revealing not to mention cool thoughts on that topic to Mary.

I’m still learning from you, but I’m improving myself. I definitely enjoy reading everything that is posted on your site.Keep the aarticles coming. I liked it!

You should take part in a contest for one of the best blogs on the web. I will recommend this site!

Woh I like your blog posts, saved to favorites! .

Perfect piece of work you have done, this website is really cool with superb information.

You are my inhalation, I have few blogs and occasionally run out from to post .

Hey there! I’m at work surfing around your blog from my new apple iphone! Just wanted to say I love reading your blog and look forward to all your posts! Keep up the fantastic work!

Very interesting subject , thankyou for putting up.

I have been absent for some time, but now I remember why I used to love this site. Thank you, I will try and check back more frequently. How frequently you update your web site?

I’ve been exploring for a bit for any high-quality articles or blog posts on this sort of area . Exploring in Yahoo I at last stumbled upon this website. Reading this information So i’m happy to convey that I have an incredibly good uncanny feeling I discovered exactly what I needed. I most certainly will make certain to do not forget this site and give it a glance regularly.

Great post. I am facing a couple of these problems.

Appreciate it for this post, I am a big fan of this internet site would like to proceed updated.

My brother recommended I would possibly like this website. He was entirely right. This put up actually made my day. You cann’t imagine simply how much time I had spent for this info! Thank you!

A formidable share, I simply given this onto a colleague who was doing slightly analysis on this. And he in reality purchased me breakfast because I found it for him.. smile. So let me reword that: Thnx for the treat! But yeah Thnkx for spending the time to discuss this, I really feel strongly about it and love reading more on this topic. If doable, as you turn into experience, would you thoughts updating your weblog with more particulars? It’s highly helpful for me. Massive thumb up for this weblog publish!

This is a very good tips especially to those new to blogosphere, brief and accurate information… Thanks for sharing this one. A must read article.

Hello! I just would like to give a huge thumbs up for the great info you have here on this post. I will be coming back to your blog for more soon.

Enjoyed reading this, very good stuff, thanks. “Shared joys make a friend, not shared sufferings.” by Friedrich Wilhelm Nietzsche.

I am constantly invstigating online for articles that can benefit me. Thanks!

I’m really enjoying the design and layout of your website. It’s a very easy on the eyes which makes it much more pleasant for me to come here and visit more often. Did you hire out a developer to create your theme? Outstanding work!

I’ve been exploring for a little for any high quality articles or blog posts on this kind of area . Exploring in Yahoo I at last stumbled upon this website. Reading this information So i’m happy to convey that I’ve a very good uncanny feeling I discovered just what I needed. I most certainly will make sure to do not forget this website and give it a glance regularly.

Nice read, I just passed this onto a friend who was doing a little research on that. And he actually bought me lunch since I found it for him smile Thus let me rephrase that: Thanks for lunch!

amei este site. Pra saber mais detalhes acesse nosso site e descubra mais. Todas as informações contidas são conteúdos relevantes e únicos. Tudo que você precisa saber está está lá.

amei este site. Para saber mais detalhes acesse nosso site e descubra mais. Todas as informações contidas são conteúdos relevantes e exclusivos. Tudo que você precisa saber está ta lá.

After examine a couple of of the blog posts in your web site now, and I actually like your approach of blogging. I bookmarked it to my bookmark web site listing and might be checking back soon. Pls take a look at my web page as properly and let me know what you think.

Just what I was searching for, thanks for putting up.

I besides think therefore, perfectly indited post! .

Hello. splendid job. I did not imagine this. This is a splendid story. Thanks!

Just what I was searching for, appreciate it for posting.

Can you be more specific about the content of your article? After reading it, I still have some doubts. Hope you can help me.

Matt,

Thanks for creating this article and clearly describing the steps. I found this page because I was looking for a way to extract heading text into a new column like you did with the Group Name. I’ll share my situation in case it helps someone else.

I was looking for a way to take the output from DIR C:\ /S > c:\temp\list.txt and parse it into Excel. I wanted to capture the file size and date that you don’t get with the DIR /B command. Below is my M script:

let

Source = Csv.Document(File.Contents(“C:\temp\list.txt”),[Delimiter=”#(tab)”, Columns=1, Encoding=1252, QuoteStyle=QuoteStyle.None]),

#”Filtered Rows” = Table.SelectRows(Source, each not Text.Contains([Column1], ” File(s) “) and not Text.Contains([Column1], ” Dir(s) “) and not Text.Contains([Column1], ” “) and not Text.StartsWith([Column1], ” Volume “) and [Column1] “”),

#”Added Directory” = Table.AddColumn(#”Filtered Rows”, “Directory”, each if Text.Start([Column1],14)=” Directory of ” then Text.Middle([Column1],14) else null),

#”Filled Down Directory” = Table.FillDown(#”Added Directory”,{“Directory”}),

#”Filtered Directory Rows” = Table.SelectRows(#”Filled Down Directory”, each not Text.StartsWith([Column1], ” “)),

#”Split Column into Date” = Table.SplitColumn(#”Filtered Directory Rows”, “Column1”, Splitter.SplitTextByPositions({0, 20}, false), {“Date”, “Column1.2″}),

#”Changed Type” = Table.TransformColumnTypes(#”Split Column into Date”,{{“Date”, type datetime}, {“Column1.2″, type text}}),

#”Trimmed Text” = Table.TransformColumns(#”Changed Type”,{{“Column1.2″, Text.Trim, type text}}),

#”Split Column into Size Filename” = Table.SplitColumn(#”Trimmed Text”, “Column1.2″, Splitter.SplitTextByEachDelimiter({” “}, QuoteStyle.Csv, false), {“Size”, “Filename”}),

#”Changed Type1″ = Table.TransformColumnTypes(#”Split Column into Size Filename”,{{“Size”, Int64.Type}}),

#”Split Column by Delimiter1″ = Table.SplitColumn(#”Changed Type1″, “Filename”, Splitter.SplitTextByEachDelimiter({“.”}, QuoteStyle.Csv, true), {“Filename”, “Type”}),

#”Changed Type2″ = Table.TransformColumnTypes(#”Split Column by Delimiter1″,{{“Filename”, type text}, {“Type”, type text}}),

#”Reordered Columns” = Table.ReorderColumns(#”Changed Type2″,{“Directory”, “Filename”, “Type”, “Date”, “Size”}),

#”Sorted Rows” = Table.Sort(#”Reordered Columns”,{{“Directory”, Order.Ascending}, {“Filename”, Order.Ascending}}),

ExcelLink = Table.AddColumn(#”Sorted Rows”, “ExcelLink”, each “‘=HYPERLINK(“”” & [Directory] & “\” & [Filename] & “.” & [Type] & “””, “”” & [Filename] & “””) “)

in

ExcelLink

Thanks for posting. I am not onsite with that client any more so I have no way to follow up. But thanks for sharing.

And while i’m rolling on this topic I’ll share another piece that fell into place for me today. Like you I always need to find a users NetworkID. Problem is Active Directory is the proverbial “haystack” and NetworkID the “needle”. I searched the haystack many times. I sought help from Google and even begged on a few forums. Today I came across the post below which provided the guidance I needed. NetworkID is found in the “user” object when you expand “securityPrincipal”… the field “sAMAccountName”. Here is the link:

https://social.technet.microsoft.com/Forums/office/en-US/1f55addf-8bd4-4806-af6d-79fef66268fa/power-query-active-directory-how-to-pull-a-list-of-users-email-and-network-id?forum=powerquery

Matt- sorry for the delay… never saw your question. Here are the steps I perform in Power Query:

• Use Active Directory as source & point to your domain (such as acme.com)

• Expand Object Categories

• Filter to category “group”

• Expand Group object & select “distinguishedName”

• Filter distinguishedName to find the desired group. You can parse the distinguishedName into its components but I typically just do a Text.Contains() to get what I’m after.

• Expand “Objects.group”

• Expand “Objects.group.member”

• Expand “Objects.group.member” (again)

Now you have a list of members!

Matt- I had similar challenges, but implemented a PQ only solution pretty quickly. Active Directory is a standard source in PQ. I pointed it to my customer’s domain (like acme.com), expanded the object categories, filtered to [Category]=group, expanded [Objects] then a whole trove of data appeared. To get a member list for ‘Finance%’ AD groups, I filtered [DistinguishedName] to contain “Finance” then expanded the [Objects.group], then expanded [Objects.group.member]. From there its just cleanup to get the desired output. Works great. I even share these reports with management so they can see who has access to sensitive corporate resources. Anyone that has access to AD can refresh it.

Thanks Brent. One thing I know for sure is I don’t know everything. I love it when I share something and then someone helps me improve my understanding. Thanks for your tips.

Hi Brent

I have been trying to get this to work the way you describe, but no luck. I can’t find any names of groups or names of users no matter where I go up or down the hierarchy. Any other tips?

If you use Power BI… you could use an R script to run your batch file… 🙂

R you on commission? 🙂